I've been thinking a lot recently about how organisations should actually deploy LLM-based agents into real workflows. Not the demo. Not the proof of concept. The thing that runs in production, touches systems of record, and has consequences when it gets things wrong.

And I've arrived at a position that I suspect will be unpopular with the "move fast and prompt things" crowd: if you're deploying AI agents into enterprise workflows without an explicit finite state machine governing their behaviour, you're building a system that will fail in ways you can't predict, can't audit, and can't explain to a regulator.

Let me walk you through how I got here.

Key Takeaways

- There are only two architectures for deploying LLM agents into workflows: a constrained agent inside a finite state machine, or a free agent with tools and prompts

- Free-agent designs demo beautifully but are architecturally bankrupt for anything with real-world consequences — they always evolve toward an FSM, by accident if not by design

- An explicit FSM gives you auditability, composability, and the ability to put probabilistic reasoning exactly where it adds value and deterministic control exactly where you need guarantees

- The real design challenge is fuzzy guards — gate decisions that require judgement. Solve them by having an LLM evaluate the condition and return a categorical decision the FSM consumes deterministically

- The skeleton is deterministic. The intelligence within and between the bones is probabilistic. Get that relationship the right way round and you have something that scales in production

The Two Architectures

When you strip away the marketing language, there are really only two architectural patterns for deploying LLM-based agents into workflows:

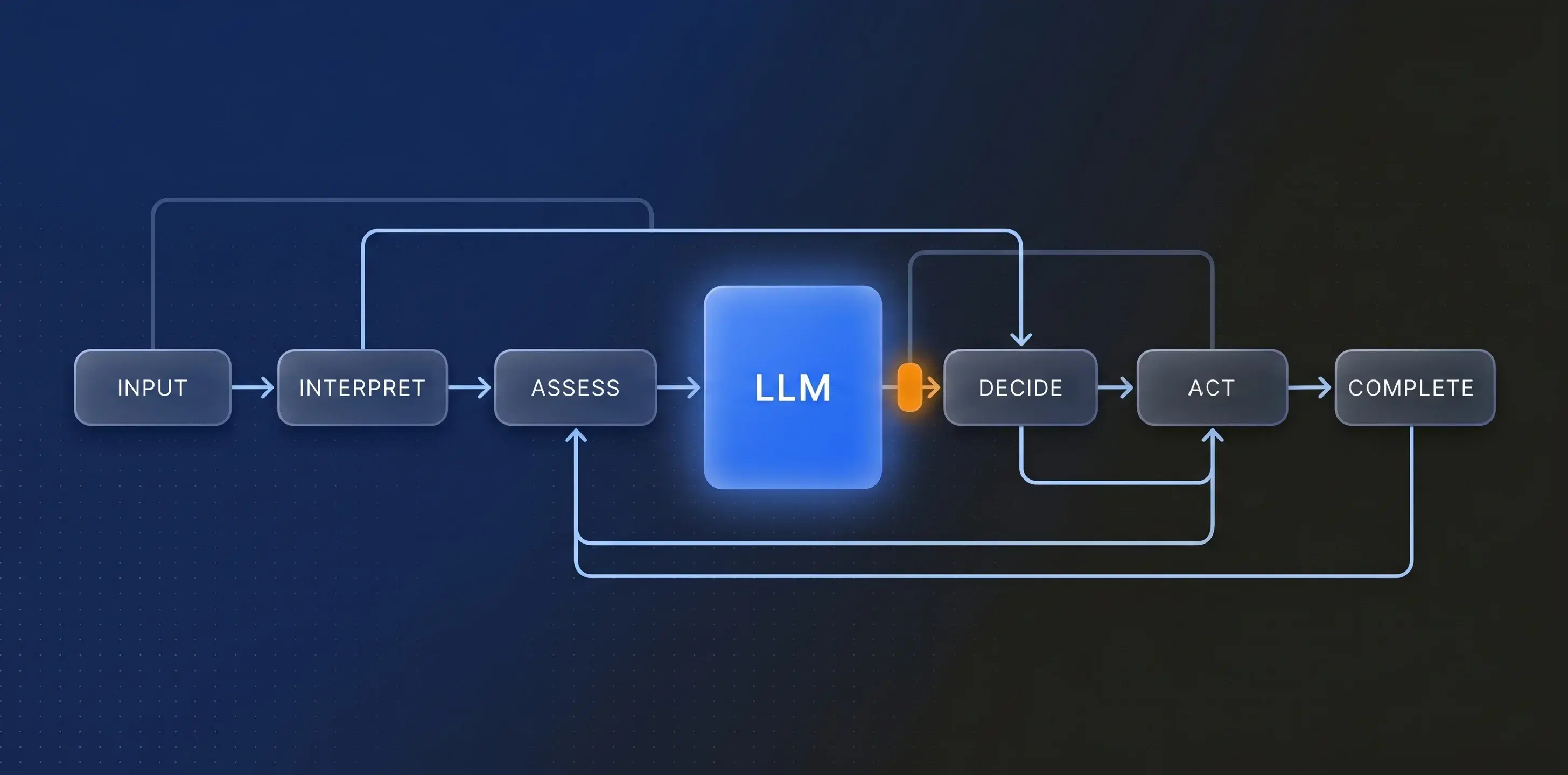

Pattern A: The Constrained Agent. You define your workflow as a finite state machine (FSM): states, transitions, and guards. Within each state, an LLM agent can operate with full probabilistic flexibility: reasoning, generating, interpreting, judging. But the transitions between states are governed by deterministic guards. The agent is powerful, but it operates within an envelope. An orchestrator manages the workflow, invoking probabilistic agents or deterministic functions depending on what each state requires.

Pattern B: The Free Agent. You give the LLM orchestrator the full set of available tools and functions, and let it decide what to call, in what order, based on its interpretation of the task. Deterministic functions are available as tools, but the LLM decides when and whether to invoke them.
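To make Pattern A concrete, here is a minimal Python sketch that assumes a toy purchase-order workflow. The state names, events and context fields are illustrative and not drawn from any particular platform. What matters is that the transition table decides what can happen next. The agent does not.

```python
from enum import Enum, auto
from typing import Callable


class State(Enum):
    DRAFT = auto()
    SUBMITTED = auto()
    APPROVED = auto()
    REJECTED = auto()


Guard = Callable[[dict], bool]

# Transition table: (current state, event) -> (guard, next state).
# Illustrative only; a real system would load this from a versioned definition.
TRANSITIONS: dict[tuple[State, str], tuple[Guard, State]] = {
    (State.DRAFT, "submit"): (lambda ctx: bool(ctx.get("line_items")), State.SUBMITTED),
    (State.SUBMITTED, "approve"): (lambda ctx: ctx.get("manager_approved") is True, State.APPROVED),
    (State.SUBMITTED, "reject"): (lambda ctx: True, State.REJECTED),
}


class IllegalTransition(Exception):
    pass


def fire(state: State, event: str, ctx: dict) -> State:
    """Apply an event; raise unless the transition exists and its guard holds."""
    key = (state, event)
    if key not in TRANSITIONS:
        raise IllegalTransition(f"{event!r} is not defined from {state.name}")
    guard, next_state = TRANSITIONS[key]
    if not guard(ctx):
        raise IllegalTransition(f"guard failed for {event!r} from {state.name}")
    return next_state
```

An agent working inside `SUBMITTED` can reason however it likes. Even so, `fire(State.DRAFT, "approve", {})` raises, because approving a purchase order that was never submitted is not a transition the system knows how to make.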

Pattern B is what most people build first. It's seductive. It demos beautifully. You type a natural language instruction, the agent figures out what needs to happen, calls the right APIs, and produces a result. It feels like magic.

It is also, I'd argue, a dead end for anything that matters.

The Precondition Problem

Here's the thing about deterministic functions in an enterprise context: they have preconditions. A purchase order can't be approved before it exists. A compliance check can't execute before the data it checks has been assembled and validated. An API call to your ERP expects specific fields in specific states. A safety-critical instruction in an operational environment requires prior authorisation steps to have been completed and recorded.

An LLM can learn these preconditions from its prompt context. You can describe them in a system prompt. You can give it examples. And it will get the ordering right most of the time.

Most of the time.

So what happens in practice? Teams building Pattern B systems discover the precondition problem through painful experience, and they start adding validation logic around every function call. "Check that X is true before calling Y." "Verify state Z before proceeding." "If this precondition isn't met, ask the user / retry / abort."

They build, in effect, an ad hoc, informally specified, poorly documented finite state machine, expressed in prompt engineering and guard code.
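Here is a sketch of what that guard code tends to look like. Each tool the free agent may call gets wrapped in its own precondition check. The `requires` decorator and the tool names are hypothetical, written for illustration.

```python
import functools
from typing import Callable


class PreconditionFailed(Exception):
    pass


def requires(check: Callable[[dict], bool], why: str):
    """Wrap a tool so it refuses to run unless check(ctx) holds."""
    def decorator(tool):
        @functools.wraps(tool)
        def wrapper(ctx: dict, *args, **kwargs):
            if not check(ctx):
                raise PreconditionFailed(f"{tool.__name__}: {why}")
            return tool(ctx, *args, **kwargs)
        return wrapper
    return decorator


# Hypothetical tools exposed to a free agent.
@requires(lambda ctx: "po_id" not in ctx, "PO already exists")
def create_po(ctx: dict) -> None:
    ctx["po_id"] = "PO-1"
    ctx["status"] = "draft"


@requires(lambda ctx: ctx.get("status") == "draft", "PO must exist and be a draft")
def approve_po(ctx: dict) -> None:
    ctx["status"] = "approved"
```

Every one of those `status` checks is a transition in disguise. Add a few dozen tools, plus retry and escalation logic for when a check fails, and you end up with the implicit state machine described above. The difference is that nothing gives you a single place to read it from.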

Why Starting With Structure Wins

An explicit FSM gives you properties that are very hard to retrofit:

Auditability. You can inspect the state machine definition and determine, without running anything, what the legal transitions are. You can answer the question "could the system ever have reached state X without passing through state Y?" by looking at the graph. In regulated industries — finance, healthcare, rail, energy — this isn't a nice-to-have. It's table stakes.
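Once the workflow is a graph, the audit question can be answered mechanically. This sketch assumes the FSM is held as a plain adjacency map. That representation is my assumption; FSM libraries have their own. The method is to exclude the state that must not be bypassed and then check whether the target is still reachable.

```python
from collections import deque


def reachable_avoiding(graph: dict[str, set[str]], start: str, target: str, avoid: str) -> bool:
    """True if target is reachable from start on some path that never visits avoid."""
    if start == avoid:
        return False
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == target:
            return True
        for nxt in graph.get(node, set()):
            if nxt != avoid and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


# Illustrative workflow: state -> set of legal next states.
WORKFLOW = {
    "received": {"validated", "rejected"},
    "validated": {"compliance_checked"},
    "compliance_checked": {"approved", "rejected"},
    "approved": set(),
    "rejected": set(),
}
```

`reachable_avoiding(WORKFLOW, "received", "approved", "compliance_checked")` returns `False`. No route to approval skips the compliance check, and you can establish that without executing anything.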

Composability. If your states and transitions are well-defined, you can compose workflows from sub-workflows with clean interfaces at the boundaries. You can build a library of reusable workflow components. You can version them, test them independently, and swap them without destabilising the whole. Try doing that with a prompt chain.
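Here is a sketch of that composition, assuming workflows are represented as adjacency maps and using a hypothetical `embed` helper. A sub-workflow exposes one entry state and a set of exit states. That boundary is all the parent workflow needs to know about it.

```python
def embed(parent: dict[str, set[str]], placeholder: str,
          child: dict[str, set[str]], child_start: str,
          child_exits: set[str], prefix: str) -> dict[str, set[str]]:
    """Replace placeholder in parent with the states of child.

    Edges into the placeholder are redirected to the child's start state,
    and the child's exit states inherit the placeholder's outgoing edges.
    Child states are prefixed to avoid name clashes with the parent.
    """
    def rename(state: str) -> str:
        return f"{prefix}.{state}"

    successors = parent[placeholder]
    result: dict[str, set[str]] = {}
    for state, nexts in parent.items():
        if state == placeholder:
            continue
        result[state] = {rename(child_start) if n == placeholder else n for n in nexts}
    for state, nexts in child.items():
        out = {rename(n) for n in nexts}
        if state in child_exits:
            out |= successors
        result[rename(state)] = out
    return result


PARENT = {"intake": {"review"}, "review": {"closed"}, "closed": set()}
REVIEW = {"triage": {"assess"}, "assess": {"done"}, "done": set()}
combined = embed(PARENT, "review", REVIEW, "triage", {"done"}, "review")
```

The review sub-workflow can be versioned and tested on its own, then swapped for a different implementation with the same entry and exit states without touching `PARENT`.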

Appropriate allocation of uncertainty. This is the key insight: the FSM lets you put probabilistic reasoning exactly where it adds value, and deterministic control exactly where you need guarantees.

Start with the entry-level requirement: within any given state, the activities that occur can be both deterministic and probabilistic. A deterministic function validates a data format; an LLM agent interprets an ambiguous input, generates a summary, or classifies a document. Both happen inside the same state. The FSM doesn't care — it governs the transitions between states, not the work that happens within them. This is the baseline. Any hybrid architecture needs to support this as a minimum.
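Here is a sketch of the work done inside a single state. It assumes the model is injected as a plain callable. `llm` is hypothetical and stands in for whatever client you use. The deterministic check and the model call sit side by side, and the FSM only ever sees the returned dictionary.

```python
import re
from typing import Callable

# Hypothetical: any function that takes a prompt and returns model text.
LLM = Callable[[str], str]

INVOICE_ID = re.compile(r"^INV-\d{6}$")


def handle_intake(doc: dict, llm: LLM) -> dict:
    """Work done inside an 'intake' state; the FSM sees only the outputs."""
    # Deterministic: a format check with a guaranteed answer.
    id_valid = bool(INVOICE_ID.match(doc.get("invoice_id", "")))
    # Probabilistic: free-text interpretation delegated to a model.
    summary = llm(f"Summarise this supplier note in one sentence:\n{doc.get('note', '')}")
    return {"id_valid": id_valid, "summary": summary.strip()}
```

A deterministic guard leaving this state could then depend only on `id_valid`, however the summary was produced.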

The transitions between states are governed by guards that enforce ordering, preconditions, and business rules. In the simple case, those guards are deterministic: "has the manager approved?" "is the balance sufficient?" Flexibility within the state. Determinism at the boundaries.
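In code, these deterministic guards are easiest to audit when kept as small, named, pure predicates. This sketch uses field names I've assumed for illustration. It returns the names of any failing guards, so a blocked transition records why it was blocked.

```python
from typing import Callable

Guard = Callable[[dict], bool]


def manager_approved(ctx: dict) -> bool:
    return ctx.get("approval", {}).get("role") == "manager"


def balance_sufficient(ctx: dict) -> bool:
    # A missing amount defaults to infinity, so the guard fails closed.
    return ctx.get("balance", 0) >= ctx.get("amount", float("inf"))


def failing_guards(guards: list[Guard], ctx: dict) -> list[str]:
    """Names of guards that do not hold; an empty list means the transition may fire."""
    return [g.__name__ for g in guards if not g(ctx)]
```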

But there's an upper bar — and that's where it gets genuinely interesting.

The Upper Bar: Fuzzy Guards

The genuinely challenging design problem in this architecture is: what happens when the guards themselves require judgement?

In a simple workflow, guards are boolean predicates on well-structured data. "Has the document been signed?" "Is the payment cleared?"

But in the workflows where LLM agents add the most value, some guards are inherently fuzzy. "Is this risk assessment sufficiently thorough to proceed to review?" "Does this incident report contain enough detail to escalate?" "Is this supplier response substantively compliant with our requirements?"

If you've ever sat through a lifecycle gateway review, you already know this problem intimately. Lifecycle gateway governance is the classic fuzzy guard — and it's everywhere. Product development lifecycles. Capital expenditure approvals. Asset acquisition and disposal. Infrastructure programmes. Any process where something of value progresses through governed phases, each separated by a formal gate at which someone has to decide: is this fit to proceed?

The workflow structure is deterministic — concept, feasibility, design, build, test, deploy, each gate clearly defined. But the decision at each gate? That's expert judgement. "Is this design fit for purpose?" "Does the business case still hold?" "Are the residual risks acceptable?" "Is the evidence of compliance sufficient to proceed?" These aren't boolean predicates. They're the considered opinions of experienced people, synthesised from incomplete information, weighed against standards that require interpretation. Every organisation that manages products, assets, or capital programmes lives with this tension daily.

Organisations have been managing this tension — deterministic workflow, probabilistic gate decisions — for decades, long before anyone mentioned AI. What's changed is that LLMs can now participate in that gate decision, not as a replacement for expert judgement but as a means of accelerating it: assembling the evidence, highlighting gaps, checking submissions against criteria, surfacing inconsistencies that a human reviewer might miss under time pressure.

The pattern I've landed on is guards that are themselves LLM-evaluated, but whose outputs are reduced to simple, categorical decisions that the workflow can act on.

The LLM assesses a fuzzy condition and returns a categorical decision — proceed, escalate, reject, request more information. The FSM consumes that categorical output as a normal deterministic guard. The uncertainty lives inside the guard evaluation; the workflow structure remains deterministic. In a gateway review, the LLM might assess a design submission against a standard and return "compliant," "non-compliant," or "further evidence required" — a categorical output that the FSM treats like any other guard, while the reasoning behind the assessment is logged and available for the human reviewer who owns the final decision.
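Here is a minimal sketch of a fuzzy guard. It assumes the model is reachable through a hypothetical `llm` callable that returns text after being asked to answer in JSON. The three categories mirror the gateway example above. Parsing is strict. Anything outside the allowed categories falls back to "further evidence required", a conservative default I've chosen for illustration, not a rule.

```python
import json
from enum import Enum
from typing import Callable


class GateDecision(Enum):
    COMPLIANT = "compliant"
    NON_COMPLIANT = "non-compliant"
    FURTHER_EVIDENCE = "further evidence required"


# Hypothetical: any callable that sends a prompt to a model and returns its text.
LLM = Callable[[str], str]

PROMPT = (
    "Assess the submission against the standard. Reply with JSON: "
    '{"decision": one of ' + json.dumps([d.value for d in GateDecision])
    + ', "reasoning": "..."}\n'
)


def evaluate_gate(submission: str, standard: str, llm: LLM) -> tuple[GateDecision, str]:
    """Reduce an LLM's judgement to a categorical decision plus reasoning for the log."""
    raw = llm(PROMPT + f"Standard:\n{standard}\n\nSubmission:\n{submission}")
    try:
        parsed = json.loads(raw)
        return GateDecision(parsed["decision"]), str(parsed.get("reasoning", ""))
    except (ValueError, KeyError, TypeError, AttributeError):
        # Assumption: unparseable or off-list output means "not yet fit to proceed".
        return GateDecision.FURTHER_EVIDENCE, f"unparseable model output: {raw!r}"


# The FSM consumes the category exactly like a deterministic guard.
NEXT_STATE = {
    GateDecision.COMPLIANT: "awaiting_human_signoff",
    GateDecision.NON_COMPLIANT: "returned_to_author",
    GateDecision.FURTHER_EVIDENCE: "evidence_requested",
}
```

Here a `COMPLIANT` decision routes to a human sign-off state rather than straight to approval. The reasoning string travels with it.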

This matters because you can now separately audit, test, tune, and even swap out the guard evaluations without touching the workflow topology. You can A/B test different LLMs or different prompts for a specific guard while the rest of the workflow remains stable. You can log the LLM's reasoning for the guard decision alongside the workflow audit trail, giving you explainability at exactly the point where judgement was exercised — which, not coincidentally, is exactly the point where regulators and auditors will ask their hardest questions.
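Here is one way to get that separation. The sketch assumes each fuzzy guard is identified by name and evaluated by a swappable function. The `GuardRegistry` class and the version labels are hypothetical. The workflow asks the registry for a decision, and the registry records which evaluator produced it and why.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# An evaluator maps a submission to (decision label, reasoning).
Evaluator = Callable[[str], tuple[str, str]]


@dataclass
class GuardRegistry:
    """Fuzzy-guard evaluators keyed by guard name, swappable without touching topology."""
    evaluators: dict[str, tuple[str, Evaluator]] = field(default_factory=dict)
    audit_log: list[dict] = field(default_factory=list)

    def register(self, guard: str, version: str, evaluator: Evaluator) -> None:
        self.evaluators[guard] = (version, evaluator)

    def evaluate(self, guard: str, submission: str) -> str:
        version, evaluator = self.evaluators[guard]
        decision, reasoning = evaluator(submission)
        # Record which evaluator version decided, and why, alongside the decision.
        self.audit_log.append({
            "guard": guard,
            "evaluator_version": version,
            "decision": decision,
            "reasoning": reasoning,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return decision
```

Swapping `prompt-v1` for `prompt-v2`, or routing a share of cases to each for an A/B comparison, changes only the registry entry. The transition table stays the same.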

The Meta-Orchestrator Trap

One more pitfall worth flagging. There's a temptation — and I've seen this in several enterprise AI platform designs — to build a "meta-orchestrator": an LLM that sits above the FSM layer and decides which workflow to instantiate, or which path through a configurable workflow to take.

This can work, but recognise what you're doing: you're reintroducing Pattern B at a higher level of abstraction. If the meta-orchestrator is making consequential decisions about which workflow applies to a given situation, those decisions themselves need guard rails. You need an FSM of FSMs, or at minimum, a classification step with well-defined categories and explicit fallback behaviour.

The recursion has to bottom out somewhere in determinism. If it doesn't, you've just moved the "most of the time" problem up a level.
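Bottoming out can be as simple as a closed set of labels and a fixed fallback. This sketch assumes a hypothetical model-backed `classify` callable and uses illustrative workflow names. The model may suggest a workflow, but the lookup makes the decision.

```python
from typing import Callable

# Hypothetical: a model call that returns a label for the incoming request.
Classifier = Callable[[str], str]

# Closed set of workflows the meta-orchestrator may choose between.
WORKFLOWS = {"purchase_order", "supplier_onboarding", "defect_report"}
FALLBACK = "human_triage"  # explicit, deterministic fallback


def route(request: str, classify: Classifier) -> str:
    """Pick a workflow only from the fixed set; anything else goes to a human."""
    try:
        label = classify(request).strip().lower()
    except Exception:
        # A model or network failure also takes the fallback path.
        return FALLBACK
    return label if label in WORKFLOWS else FALLBACK
```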

Where This Leaves Us

I think the industry is going to converge on Pattern A — not because it's fashionable, but because it's the only architecture that provides the guarantees enterprises actually need. The organisations that get there first, by design rather than by painful iteration, will have a significant structural advantage.

The real innovation isn't in the LLM. The models are increasingly commodity. The real innovation is in the workflow architecture that lets you deploy those models into consequential enterprise processes with the right combination of flexibility and control.

I'm curious where others have landed on this. Are you building Pattern A or Pattern B? Have you hit the precondition problem? How are you handling fuzzy guards? I'd genuinely like to hear from people who are deploying LLM agents into workflows where getting it wrong has real consequences.

Drop your thoughts in the comments.

Postscript: None of This Is New

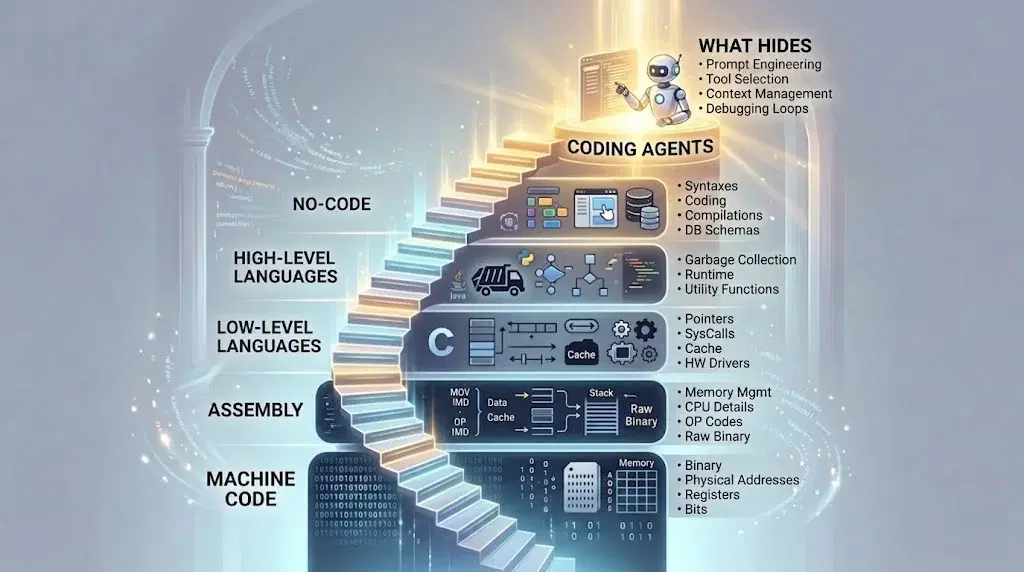

A final thought, and perhaps the most important one: none of this is new. Even without AI in the picture, developing a finite state machine has always provided a robust architectural foundation.

We recently developed a defect management system for safety-critical infrastructure. There were no LLMs involved, just a complex domain with high-consequence decisions and a lot of painful edge cases. The FSM provided a stable framework that ruled out unnecessary states and transitions. It reduced the control logic to a set of discrete states and transitions while still supporting the discretionary activity and decision-making that the process needed. The edge cases that had previously made the process fragile weren't eliminated; they were contained, given clear boundaries, and made manageable.

The lesson I took from that experience: if a finite state machine is what you need to make a human-driven workflow reliable and auditable, why would you expect to need less structure when you hand parts of that workflow to a probabilistic model?

You wouldn't. You'd need more.