Key Takeaways

- Every prior abstraction in programming — from assembly to no-code — kept the same quiet promise: same input, same output, every time

- AI coding agents are the first abstraction layer that breaks that promise. Determinism is replaced by sampling

- Once the layer below rolls dice, the habits that software engineering was built on stop working: reproducibility, testing, debugging, and trust all change shape

- No single approach to using AI agents fits all the work. Vibe coding, spec-driven design, agent teams, and inline autocomplete each have a sweet spot — and a zone where they quietly fail

- The job is no longer writing code that works. It's building systems — guardrails, conventions, governance scripts, stable APIs, humans in the loop — that produce working code reliably, despite the randomness underneath

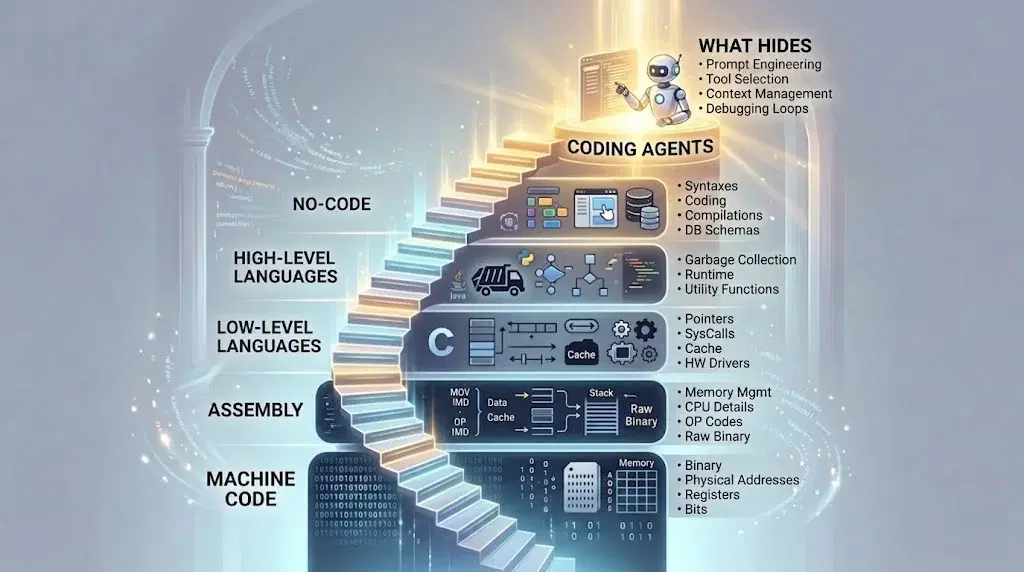

The ladder we've been climbing for decades

Programming started at the bottom of a ladder. I started a few rungs up.

At the bottom was machine code — raw binary on punch cards. The programmer thought in the machine's language: addresses, opcodes, bits. One misplaced card meant starting over. The machine was the boss. You fed it.

Assembly raised the floor. Instructions got names a human could read. The bits were still there — the assembler hid them. You wrote MOV instead of 10110000. The first layer of forgetting.

Pascal raised it further. I came in here. Variables, loops, structured blocks — concepts that didn't exist on the chip but felt natural in your head. The compiler turned them into machine instructions on your behalf. I stopped thinking about registers entirely.

C let me forget more, while reaching further. I could touch files, memory, the network — but through clean abstractions, not raw syscalls. The operating system stopped being something I addressed and became something I asked.

Java let me forget the machine altogether. Object orientation gave me a way to think in the shape of the problem, not the shape of the hardware. Classes, inheritance, interfaces. I stopped writing procedures and started modelling the world. The runtime handled the rest.

Then libraries and SDKs took over the next layer up. You stopped writing HTTP clients — you imported one. You stopped writing UI loops — the framework gave you one. Whole domains that used to be projects became single function calls. The mechanical work moved into other people's code, packaged and ready.

Then no-code platforms, where you could drag boxes onto a canvas without typing at all. Even the syntax was gone.

Each step was the same shape. Each new layer hid the one below it. Each new layer let me say more with less. And each new layer let more people build software — because what they had to know shrank, every time.

And then, decades into this, something different happened. AI arrived.

I described what I wanted in plain English. It wrote the code. Not a snippet — a working program. After punch cards, after registers, after all the semicolons and brackets and build systems, just words.

It felt like the top of the ladder. The last step.

It isn't a step at all. It's a transformation, from deterministic to probabilistic. The new layer breaks a rule the entire ladder was built on, a rule so obvious that nobody ever said it out loud. And breaking that rule changes everything.

The quiet promise every previous layer kept

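Given the same source and the same toolchain, assembly turns into the same machine code every time. C compiles to the same assembly. A Python script run on the same input produces the same result. A SQL query against the same data returns the same rows. A Terraform configuration applied to the same state builds the same cloud setup.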

This is so obvious that we rarely say it. But it's the thing that made programming work at all.

Everything we built on top of that contract quietly depends on it. Version control tracks diffs because diffs are stable. Tests verify behavior because behavior repeats. A bug counts as a bug because it reproduces. Code review is meaningful because the code you approve is the code that runs. The whole culture of software engineering rests on "run it again and it does the same thing."

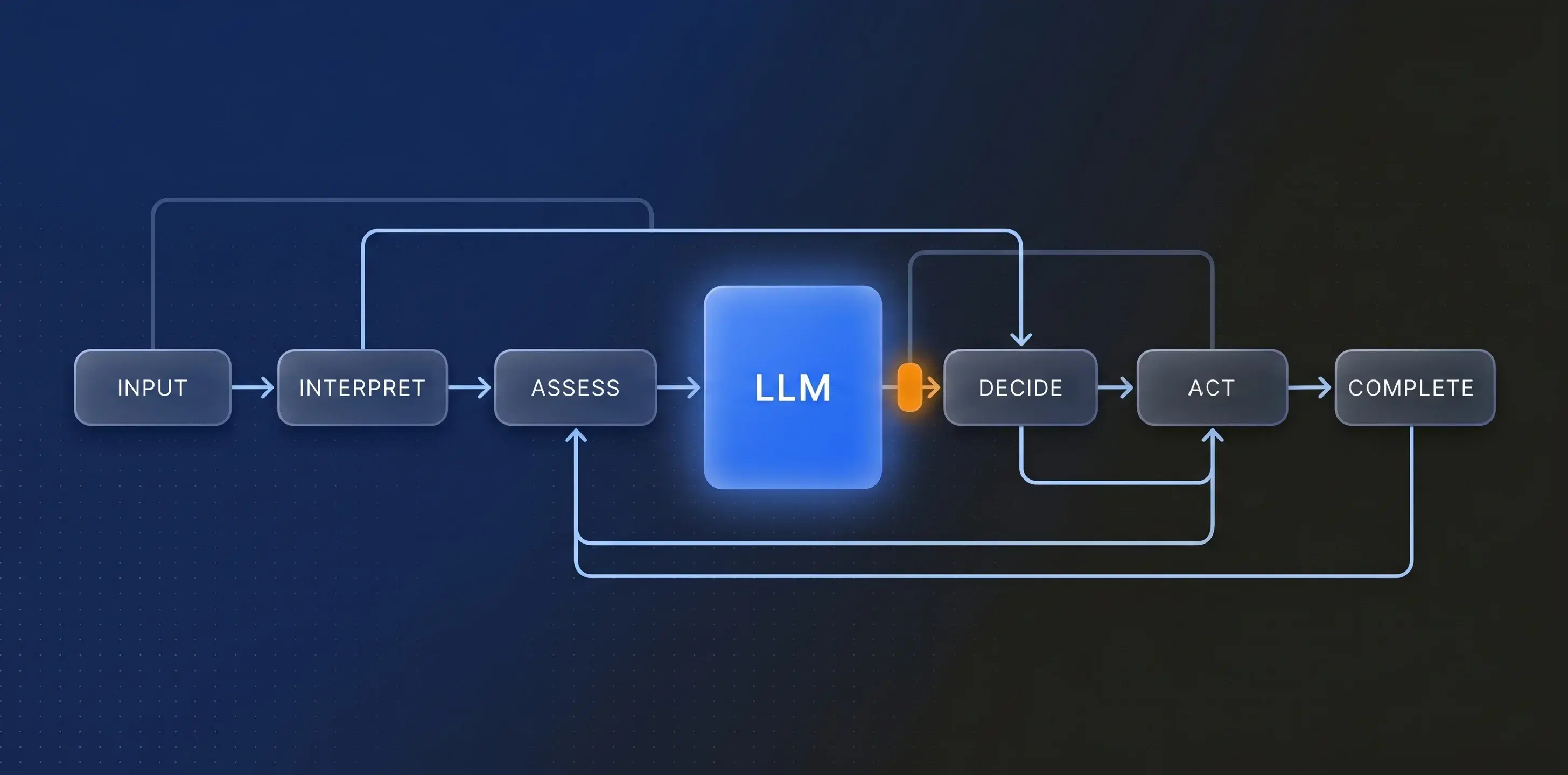

The new layer does not do that

Ask an AI agent to build a function today. Ask it to build the same function tomorrow. You will almost certainly get different code. The shape might be similar, but the names, the structure, and the edge cases it handles can all shift.

Ask it to fix a bug. It finds one fix today. It finds a different fix tomorrow. Sometimes it doesn't find the bug at all.

This isn't a flaw waiting to be engineered away. It's how the layer works. Sampling is the mechanism. The model assigns probabilities to plausible next tokens and draws from them, one token at a time. Different draws, different code.
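
To make the mechanism concrete, here is a minimal sketch of temperature sampling over a toy vocabulary. The token list and scores are invented for illustration; a real model scores tens of thousands of tokens at every step, but the draw works on the same principle.

```python
import math
import random

# Hypothetical candidates for the next token, with made-up raw scores.
TOKENS = ["result", "total", "acc", "out"]
LOGITS = [2.0, 1.6, 1.1, 0.3]

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, logits, temperature, rng):
    """Draw one token at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

def sample_many(n, temperature, seed=None):
    """Draw n tokens; with seed=None every call can differ."""
    rng = random.Random(seed)
    return [sample_token(TOKENS, LOGITS, temperature, rng) for _ in range(n)]

if __name__ == "__main__":
    print(sample_many(10, temperature=1.0))   # a mix of names, varies per run
    print(sample_many(10, temperature=0.05))  # heavily favors the top-scored name
```

Lowering the temperature narrows the spread, and fixing the seed makes this toy repeatable. Hosted models rarely give you that much control, which is why the rest of this piece treats the randomness as a given.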

That's a fundamentally different kind of abstraction than anything we've built on before.

What we lose when determinism goes

Once the layer below you rolls dice, a lot of habits stop working.

Reproducibility is gone. "It worked on my machine" used to be a joke about environment differences. Now it's a literal statement about the same prompt producing different code on different runs.

Testing gets harder. A test passing once doesn't mean the system is correct — it means this particular generated version passed. The next version might fail the same test. You're not testing code anymore. You're sampling from a distribution of possible code.
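
One way to picture this is to stop asking "did it pass?" and start asking "how often does it pass?". The sketch below assumes a hypothetical `generate_candidate` that stands in for an agent call. Here it simply picks between two hand-written implementations with made-up odds, so the numbers mean nothing beyond the illustration.

```python
import random

def mean_correct(xs):
    """One plausible generated implementation: handles the empty list."""
    return sum(xs) / len(xs) if xs else 0.0

def mean_forgets_empty(xs):
    """Another plausible implementation: raises ZeroDivisionError on []."""
    return sum(xs) / len(xs)

def generate_candidate(rng):
    """Hypothetical stand-in for an agent call; the 80/20 split is invented."""
    return rng.choices([mean_correct, mean_forgets_empty], weights=[0.8, 0.2], k=1)[0]

def passes_tests(mean):
    """The fixed test suite every generated version has to survive."""
    try:
        return mean([2, 4, 6]) == 4 and mean([]) == 0.0
    except Exception:
        return False

def estimate_pass_rate(n_runs, seed=None):
    """Generate n_runs candidates and report the fraction that pass."""
    rng = random.Random(seed)
    passed = sum(passes_tests(generate_candidate(rng)) for _ in range(n_runs))
    return passed / n_runs

if __name__ == "__main__":
    print(f"pass rate over 200 generations: {estimate_pass_rate(200):.0%}")
```

A single green run tells you about one sample. The pass rate tells you about the distribution.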

Debugging gets strange. When something goes wrong, was the spec bad, or did the agent just roll poorly this time? You can't always tell. And if you regenerate and it works, you might never find out why the first try didn't.

Trust changes shape. You used to trust the compiler. You don't trust an AI agent the same way. You trust the process around it — the tests, the reviews, the retries — not the agent itself.

We tried to tame it

At Graphshare, we spent the last few years trying to use this new layer.

First was Copilot. Inline autocomplete that sometimes wrote a whole function before you'd finished naming it. Brilliant half the time, subtly wrong the other half. It felt like pair-programming with someone very fast and slightly careless.

Then ChatGPT. It quietly replaced Stack Overflow. Ask a question, get code, paste it in, tweak it, move on. Faster than Googling — but still manual. We were shuttling text between windows.

Then vibe coding. Describe what you want in prose and let the agent build it end to end. It felt like magic, especially for greenfield projects. But the moment we pointed it at a real codebase with real constraints, the magic cracked. Small bug fixes confused it. Tight integrations confused it. Anything that needed surgical precision — confused it.

So we tried spec-driven design. Write a tight spec first, let the agent implement it. Better for structured work. But the spec had to be airtight or the agent drifted. And writing airtight specs for a small change often took longer than just making the change ourselves.

Then AI agent teams — one agent plans, another writes, another reviews, another tests. This felt like running an actual engineering team inside a single terminal. Strong on deep features. But expensive. Slow. Wildly overkill for a one-line fix.

After all of this, a pattern became impossible to ignore.

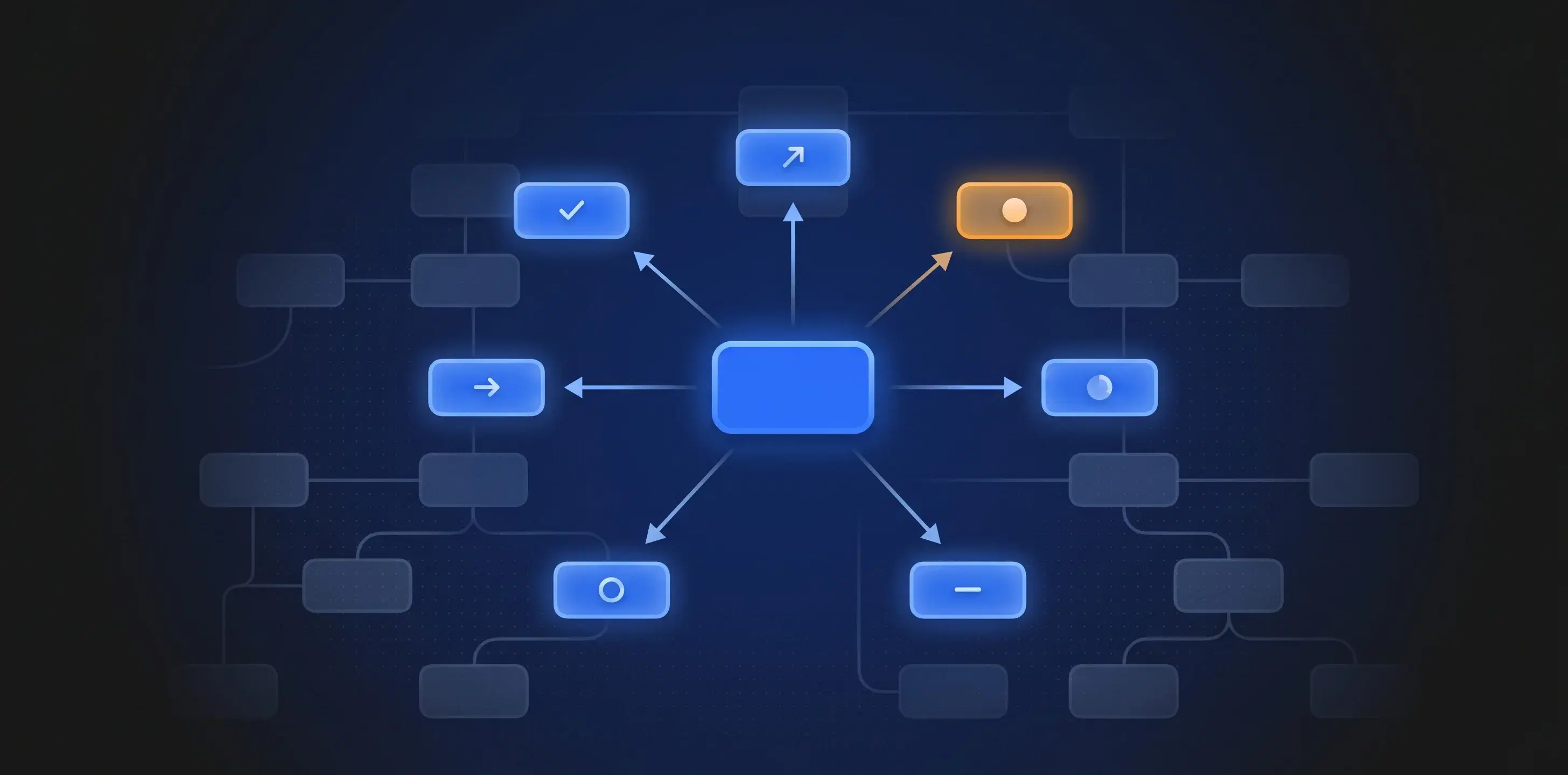

What we actually need is a mixture. A system that picks the right approach for the task in front of it. Vibe coding when scaffolding. Spec-driven when the shape is known. Agent teams when the work is deep. Plain autocomplete when you're just typing.
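
As a rough illustration of that kind of routing, the sketch below maps a task description to one of the four approaches. The `Task` fields and the order of the checks are assumptions made for the example, not a description of any particular harness.

```python
from dataclasses import dataclass
from enum import Enum

class Approach(Enum):
    AUTOCOMPLETE = "inline autocomplete"
    VIBE = "vibe coding"
    SPEC = "spec-driven design"
    TEAM = "agent team"

@dataclass(frozen=True)
class Task:
    # Hypothetical task attributes; a real router would infer these somehow.
    just_typing: bool = False
    scaffolding: bool = False
    shape_known: bool = False
    deep: bool = False

def pick_approach(task: Task) -> Approach:
    """Choose the cheapest approach that fits; the check order is an assumption."""
    if task.just_typing:
        return Approach.AUTOCOMPLETE
    if task.deep:
        return Approach.TEAM
    if task.shape_known:
        return Approach.SPEC
    if task.scaffolding:
        return Approach.VIBE
    # Nothing matched: fall back to the most conservative structured option.
    return Approach.SPEC

if __name__ == "__main__":
    print(pick_approach(Task(scaffolding=True)).value)
    print(pick_approach(Task(deep=True, shape_known=True)).value)
```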

We use our own agent harness framework. It is not a single agent but a layered system that routes each task to one of these approaches. The probabilistic layer keeps shifting underneath us: new models, new failure modes, new things that worked yesterday and don't today. So the framework keeps evolving too. We rebuild parts of it every few months as we learn what actually holds up. The ideas below are what we lean on today, none of them magical, all of them subject to change.

Guardrails keep agents inside known-good territory. They block dangerous actions before they happen — destructive commands, unverified network calls, edits outside the task scope.
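
A guardrail can be as plain as a check that runs before the agent's action does. The sketch below is a minimal version under stated assumptions: the deny patterns, the `Verdict` type, and the scope rule are all illustrative, and a real deny list would be longer and stricter.

```python
import posixpath
import re
from dataclasses import dataclass

# Illustrative deny list; these patterns are assumptions, not a complete set.
DENY_PATTERNS = [
    (re.compile(r"\brm\s+-[a-z]*r[a-z]*\b"), "recursive delete"),
    (re.compile(r"\bgit\s+push\b.*--force"), "force push"),
    (re.compile(r"\bdrop\s+(table|database)\b", re.IGNORECASE), "destructive SQL"),
]

@dataclass(frozen=True)
class Verdict:
    allowed: bool
    reason: str

def check_command(command: str) -> Verdict:
    """Block a shell command if it matches any deny pattern."""
    for pattern, label in DENY_PATTERNS:
        if pattern.search(command):
            return Verdict(False, f"blocked: {label}")
    return Verdict(True, "ok")

def check_edit(path: str, allowed_roots: list[str]) -> Verdict:
    """Allow an edit only if the normalized path stays inside the task scope."""
    normalized = posixpath.normpath(path)  # collapses ".." segments
    for root in allowed_roots:
        root = root.rstrip("/")
        if normalized == root or normalized.startswith(root + "/"):
            return Verdict(True, "ok")
    return Verdict(False, f"blocked: {normalized} is outside the task scope")

if __name__ == "__main__":
    print(check_command("rm -rf build/"))
    print(check_edit("src/billing/../../secrets.env", ["src/billing"]))
```

The point of the design is that the check is deterministic even though the agent is not: the same command gets the same verdict every time.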

Rules and conventions narrow the agent's choices. Instead of letting the model pick any of a thousand plausible patterns, we tell it which patterns we use here. Naming, structure, error handling, testing. The agent stops re-inventing and starts conforming.

Governance scripts check the work after the fact. Lint, type-check, run tests, scan for secrets, verify the change matches the intent. If something is off, the script catches it before a human has to.
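
In its simplest form, a governance script is a list of commands that must all exit cleanly, plus a few scans over the changed text. The sketch below assumes the project uses `ruff`, `mypy`, and `pytest`; swap in whatever your stack runs. The two secret patterns are common examples, not a complete scanner.

```python
import re
import subprocess
import sys

# Two well-known secret shapes, for illustration only.
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]

def find_secrets(text: str) -> list[str]:
    """Return every substring that looks like a committed secret."""
    return [m.group(0) for p in SECRET_PATTERNS for m in p.finditer(text)]

def run_checks(checks: list[tuple[str, list[str]]]) -> list[str]:
    """Run each (name, argv) check and return the names that failed."""
    failures = []
    for name, argv in checks:
        result = subprocess.run(argv, capture_output=True, text=True)
        if result.returncode != 0:
            failures.append(name)
    return failures

if __name__ == "__main__":
    # Assumed tooling; these commands are examples, not requirements.
    checks = [
        ("lint", ["ruff", "check", "."]),
        ("types", ["mypy", "."]),
        ("tests", ["pytest", "-q"]),
    ]
    failed = run_checks(checks)
    print("failed checks:", failed or "none")
    sys.exit(1 if failed else 0)
```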

Stable APIs give the agent well-typed surfaces to interact with. The fewer ad-hoc calls, the fewer ways to go wrong. Every action passes through a contract.
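
One way to read "every action passes through a contract" is that the agent never calls tools with free-form text. It emits a structured payload, and the payload is validated against a typed definition before anything runs. The action names and fields below are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ReadFile:
    path: str

@dataclass(frozen=True)
class RunTests:
    target: str
    timeout_s: int = 60

# Hypothetical registry: the only actions the agent is allowed to request.
ACTIONS = {"read_file": ReadFile, "run_tests": RunTests}

def parse_action(payload: dict):
    """Validate an agent-produced payload and return a typed action."""
    kind = payload.get("action")
    if kind not in ACTIONS:
        raise ValueError(f"unknown action: {kind!r}")
    cls = ACTIONS[kind]
    try:
        action = cls(**payload.get("args", {}))
    except TypeError as exc:  # missing or unexpected argument names
        raise ValueError(f"bad arguments for {kind}: {exc}") from exc
    # Dataclasses do not enforce field types, so check them explicitly.
    for name, expected in cls.__annotations__.items():
        if not isinstance(getattr(action, name), expected):
            raise ValueError(f"{kind}.{name} must be {expected.__name__}")
    return action

if __name__ == "__main__":
    print(parse_action({"action": "run_tests", "args": {"target": "tests/unit"}}))
```

Anything that fails validation is rejected before it touches the system, which turns a whole class of creative mistakes into a plain error message.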

Humans in the loop stay decisive on anything that matters. Architecture choices, sensitive code paths, customer-facing changes — those don't get rubber-stamped by an agent. The harness flags them and waits.
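
The "flag and wait" step can be sketched as a check over the paths a change touches. The glob list below is an assumption standing in for whatever a team considers sensitive, and the two outcome strings are placeholders.

```python
from fnmatch import fnmatchcase

# Hypothetical list of paths that always need a human decision.
SENSITIVE_GLOBS = ["auth/*", "billing/*", "migrations/*", "*.tf"]

def needs_human_review(changed_paths, sensitive_globs=SENSITIVE_GLOBS):
    """Return the changed paths that match any sensitive pattern, sorted."""
    return sorted(
        path
        for path in changed_paths
        if any(fnmatchcase(path, glob) for glob in sensitive_globs)
    )

def decide(changed_paths):
    """Hold the change for a person if anything sensitive was touched."""
    flagged = needs_human_review(changed_paths)
    return ("wait-for-human", flagged) if flagged else ("proceed", [])

if __name__ == "__main__":
    print(decide(["docs/readme.md"]))
    print(decide(["auth/login.py", "docs/readme.md", "infra/main.tf"]))
```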

None of these alone tames the probabilistic layer. Together, they shape it. The agent still rolls dice — but most rolls land inside a narrow, safe range.

Closing thought

The shift from deterministic to probabilistic isn't going to reverse. AI is the layer we work on now, and it will keep changing under us. The job is no longer writing code that works — it's building systems that produce working code, reliably, despite the randomness underneath.

At Graphshare, we keep researching and building for this new craft — shaping probabilistic systems into something dependable. The direction is clear, and the work is just beginning.